- #Install pyspark on ubuntu 18.04 with conda how to#

- #Install pyspark on ubuntu 18.04 with conda download#

If you want then, you can change the memory/ram allocated to the worker. Refresh the Web interface and you will see the Worker ID and the amount of memory allocated to it:

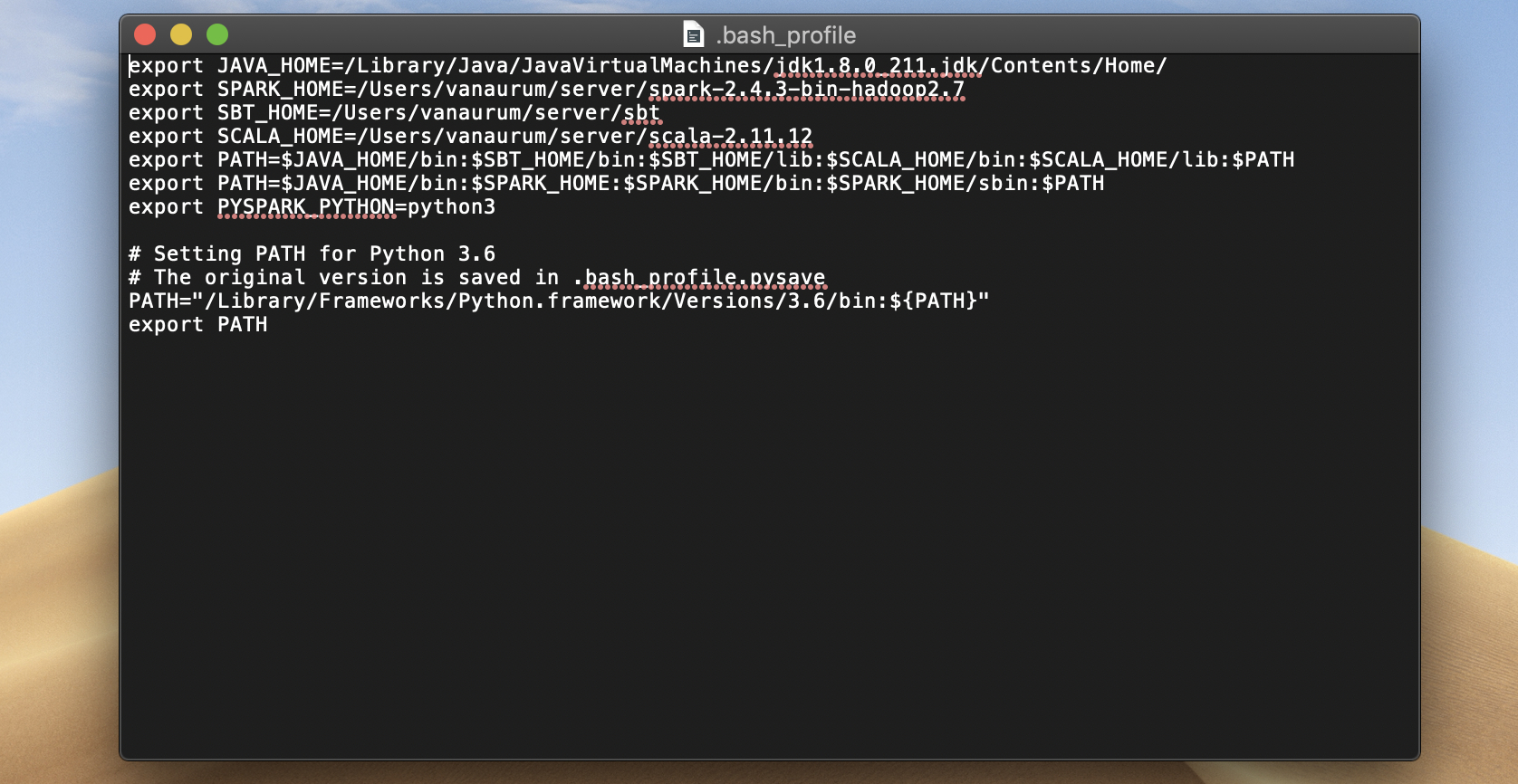

So, as our hostname is ubuntu, the command will be like this: start-worker.sh spark://ubuntu:7077 Where the default port of master is running on is 7077, you can see in the above screenshot. If you don’ know your hostname then simply type- hostname in terminal. In the above command change the hostname and port. To run Spark slave worker, we have to initiate its script available in the directory we have copied in /opt. This will allow you to access the Spark web interface remotely at – sudo ufw allow 8080 If you are using a CLI server and want to use the browser of the other system that can access the server Ip-address, for that first open 8080 in the firewall. Our master is running at spark:// Ubuntu:7077, where Ubuntu is the system hostname and could be different in your case. Now, let’s access the web interface of the Spark master server that running at port number 8080. Access Spark Master (spark://Ubuntu:7077) – Web interface –webui-port – Port for web UI (default: 8080 for master, 8081 for worker)Įxample– I want to run Spark web UI on 8082, and make it listen to port 7072 then the command to start it will be like this: start-master.sh -port 7072 -webui-port 8082Ħ. –port – Port for service to listen on (default: 7077 for master, random for worker) If you want to use a custom port then that is possible to use, options or arguments given below. Start Apache Spark master server on UbuntuĪs we already have configured variable environment for Spark, now let’s start its standalone master server by running its script: start-master.shĬhange Spark Master Web UI and Listen Port (optional, use only if require) This allows us to run its commands from anywhere in the terminal regardless of which directory we are in.Įcho "export SPARK_HOME=/opt/spark" > ~/.bashrcĮcho "export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin" > ~/.bashrcĮcho "export PYSPARK_PYTHON=/usr/bin/python3" > ~/.bashrcĥ. 
To solve this, we configure environment variables for Spark by adding its home paths to the system’s a profile/bashrc file. Now, as we have moved the file to /opt directory, to run the Spark command in the terminal we have to mention its whole path every time which is annoying.

sudo mkdir /opt/spark sudo tar -xf spark*.tgz -C /opt/spark -strip-component 1Īlso, change the permission of the folder, so that Spark can write inside it. To make sure we don’t delete the extracted folder accidentally, let’s place it somewhere safe i.e /opt directory.

#Install pyspark on ubuntu 18.04 with conda download#

Simply copy the download link of this tool and use it with wget or directly download on your system. Hence, here we are downloading the same, in case it is different when you are performing the Spark installation on your Ubuntu system, go for that. However, while writing this tutorial the latest version was 3.1.2. Now, visit the Spark official website and download the latest available version of it. Here we are installing the latest available version of Jave that is the requirement of Apache Spark along with some other things – Git and Scala to extend its capabilities.

The steps are given here can be used for other Ubuntu versions such as 21.04/18.04, including on Linux Mint, Debian, and similar Linux.

Steps for Apache Spark Installation on Ubuntu 20.04 Start Apache Spark master server on Ubuntu

#Install pyspark on ubuntu 18.04 with conda how to#

Here we will see how to install Apache Spark on Ubuntu 20.04 or 18.04, the commands will be applicable for Linux Mint, Debian and other similar Linux systems.Īpache Spark is a general-purpose data processing tool called a data processing engine.
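To change the worker's memory, Spark's standalone mode reads `SPARK_WORKER_MEMORY` from `$SPARK_HOME/conf/spark-env.sh`, and `start-worker.sh` also accepts a one-off `--memory` option (for example `start-worker.sh spark://ubuntu:7077 --memory 2g`). Below is a minimal sketch, assuming bash and that `spark-env.sh` is plain shell; `set_worker_memory` is a hypothetical helper, not part of Spark.

```bash
#!/usr/bin/env bash
# Hypothetical helper: set SPARK_WORKER_MEMORY in CONF_DIR/spark-env.sh,
# replacing any earlier setting written in the same form.
# MEM uses Spark's size format: a number followed by m or g (e.g. 512m, 2g).
set_worker_memory() {
  local conf_dir="$1" mem="$2" env_file
  if ! printf '%s\n' "$mem" | grep -Eq '^[0-9]+[mMgG]$'; then
    echo "invalid memory size: $mem" >&2
    return 1
  fi
  env_file="$conf_dir/spark-env.sh"
  touch "$env_file" || return 1
  # Keep every line except an earlier SPARK_WORKER_MEMORY export
  # (grep -v exits 1 when no lines are kept, so its status is ignored).
  grep -v '^export SPARK_WORKER_MEMORY=' "$env_file" > "$env_file.tmp" || true
  mv "$env_file.tmp" "$env_file" || return 1
  printf 'export SPARK_WORKER_MEMORY=%s\n' "$mem" >> "$env_file"
}

# Usage: give the worker 2 GB, then restart it so the setting is read.
# set_worker_memory "$SPARK_HOME/conf" 2g
# stop-worker.sh
# start-worker.sh spark://ubuntu:7077
```

Writing the value to `spark-env.sh` makes it persist across restarts, whereas `--memory` only applies to that one launch.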
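The prerequisites step above does not show an install command. One common way on Ubuntu, assumed here rather than taken from the tutorial, is `sudo apt install default-jdk git scala`, which installs Ubuntu's default JDK rather than necessarily the newest Java. A small sketch, assuming bash, to check afterwards which tools are still missing from `PATH`; `missing_cmds` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print each command from the argument list that is
# not found on PATH, one per line. Prints nothing if all are present.
missing_cmds() {
  local cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      printf '%s\n' "$cmd"
    fi
  done
}

# Usage: list which Spark prerequisites still need installing.
# missing_cmds java git scala
```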
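For the download step, the link can be built from the version number. This sketch assumes the `archive.apache.org` layout and the `-bin-hadoopX.Y` naming used for pre-built packages; confirm the exact file name on the Spark download page before relying on it. `spark_url` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper: build the Apache archive URL for a Spark release
# pre-built for a given Hadoop version, e.g. spark_url 3.1.2 3.2
spark_url() {
  local spark_version="$1" hadoop_version="$2"
  printf 'https://archive.apache.org/dist/spark/spark-%s/spark-%s-bin-hadoop%s.tgz\n' \
    "$spark_version" "$spark_version" "$hadoop_version"
}

# Usage:
# wget "$(spark_url 3.1.2 3.2)"
```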
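The extraction step says to change the folder's permissions but does not give a command. A minimal sketch, assuming you want your own user to own `/opt/spark` (one option among several, not the tutorial's stated choice): create and hand over the folder once with `sudo`, then unpack without root. `extract_spark` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper: unpack a Spark .tgz into DEST, dropping the archive's
# top-level folder (spark-X.Y.Z-bin-hadoopA.B) so that DEST/bin, DEST/sbin,
# etc. sit directly under DEST.
extract_spark() {
  local archive="$1" dest="$2"
  mkdir -p "$dest" || return 1
  tar -xzf "$archive" -C "$dest" --strip-components=1
}

# Usage: give your user ownership of /opt/spark, then extract into it.
# sudo mkdir -p /opt/spark
# sudo chown -R "$USER":"$(id -gn)" /opt/spark
# extract_spark spark-3.1.2-bin-hadoop3.2.tgz /opt/spark
```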
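Running the three `echo ... >> ~/.bashrc` lines twice adds duplicate entries. A sketch of an idempotent variant, assuming bash; `add_spark_env` is a hypothetical helper that writes the same three lines but skips any that are already present.

```bash
#!/usr/bin/env bash
# Hypothetical helper: append the Spark exports to an rc file, once.
# Single quotes keep $PATH and $SPARK_HOME literal, so they are expanded
# each time the shell starts rather than frozen at the moment of writing.
add_spark_env() {
  local rcfile="$1" spark_home="${2:-/opt/spark}" line
  touch "$rcfile" || return 1
  for line in \
    "export SPARK_HOME=$spark_home" \
    'export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin' \
    'export PYSPARK_PYTHON=/usr/bin/python3'; do
    # -x: whole-line match, -F: fixed string (no regex), -q: quiet
    grep -qxF "$line" "$rcfile" || printf '%s\n' "$line" >> "$rcfile"
  done
}

# Usage:
# add_spark_env ~/.bashrc /opt/spark && source ~/.bashrc
```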
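For the worker step, the master URL has the form `spark://hostname:port`, so it can be built from `hostname` instead of typed by hand. This is a sketch under the assumption that the worker runs on the same machine as the master; `master_url` is a hypothetical helper. Pass the port explicitly if the master was started with `--port`.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the standalone master URL that start-worker.sh
# expects, defaulting to this machine's hostname and the default port 7077.
master_url() {
  local host="${1:-$(hostname 2>/dev/null || uname -n)}" port="${2:-7077}"
  printf 'spark://%s:%s\n' "$host" "$port"
}

# Usage:
# start-worker.sh "$(master_url)"              # spark://<this host>:7077
# start-worker.sh "$(master_url ubuntu 7072)"  # master started with --port 7072
```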